Glaeser participated in a SXSW panel, along with Michael Hendrix of the Manhattan Institute and Fort Worth Councilwoman Ann Zadeh, to discuss how big data and AI tools could transform policymaking. A major hurdle in policymaking is understanding the immediate impact of policies and crisis events.

Traditional social science studies take time to develop and to reveal impact, and even then they often miss the full picture. Typical data sources are several years old, aggregated and often too broad in scope. All of this makes it hard for local governments, service providers and nonprofits to help populations in need.

Big data and AI tools have the potential to “now-cast” the impact of policy and of momentous events like Hurricane Harvey, according to a Kinder Institute study. The use case Glaeser covered analyzed how Google Street View images can be used to predict income and crime down to the city block, using a computer vision algorithm to show urban shifts. The highlight of the study is that urban change can be explored as it is happening rather than afterwards.

Using images to gauge change is not a new idea. Neither is using decades' worth of data on Google. However, this type of study is a mammoth task if it relies on people collecting and scoring the images.

The novel approach Glaeser and his colleagues introduce allows a computer to analyze millions of pieces of information and calculate change. This technology could transform how cities respond to urban change. However, the panel warned, to adopt these technologies governments must learn to become adaptive and nimble.

I would add to this discussion that governments not only need to be more adaptive, they also need community partners to leverage this data. There are real privacy concerns when governments collect big data. It is unreasonable for our government to have access to information that could be used to invade our private lives.

However, as Glaeser noted, “the point isn’t the technology; the point is creating great urban spaces.” Given that this information is already being collected, there should be a way to use the data where it fits best without giving government individual-level data.

Eleven months after Hurricane Harvey, the Kinder Institute covered a particular use case for big data: using social media and crowdsourced data to augment FEMA damage estimates. For this research, the Kinder Institute purchased and securely housed the data. With it, researchers created Harvey damage assessments to showcase the potential of this approach.

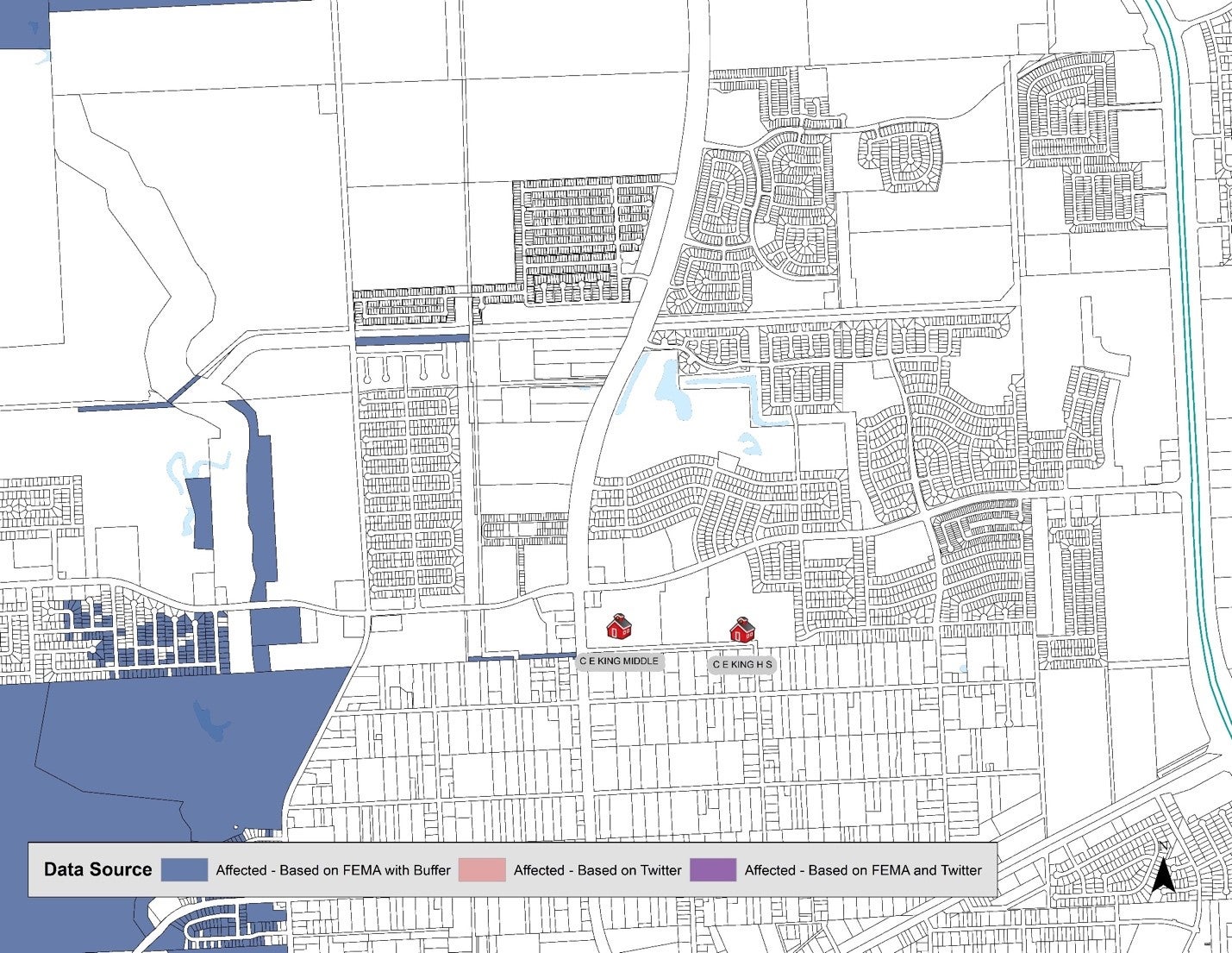

Traditional data sources show no damage or floodwaters from Hurricane Harvey in the area around Sheldon ISD's CE King High School. Map: Matthew Martinez

Traditional data sources show certain areas as unaffected by floodwaters, such as the area around Sheldon ISD’s CE King High School. However, the school and the surrounding area were significantly impacted.

CE King High School’s football stadium covered by floodwaters from Tropical Storm Harvey, Tuesday, August 29, 2017. AP Photo/David J. Phillip

CE King High School Auditorium flooded by Hurricane Harvey waters.

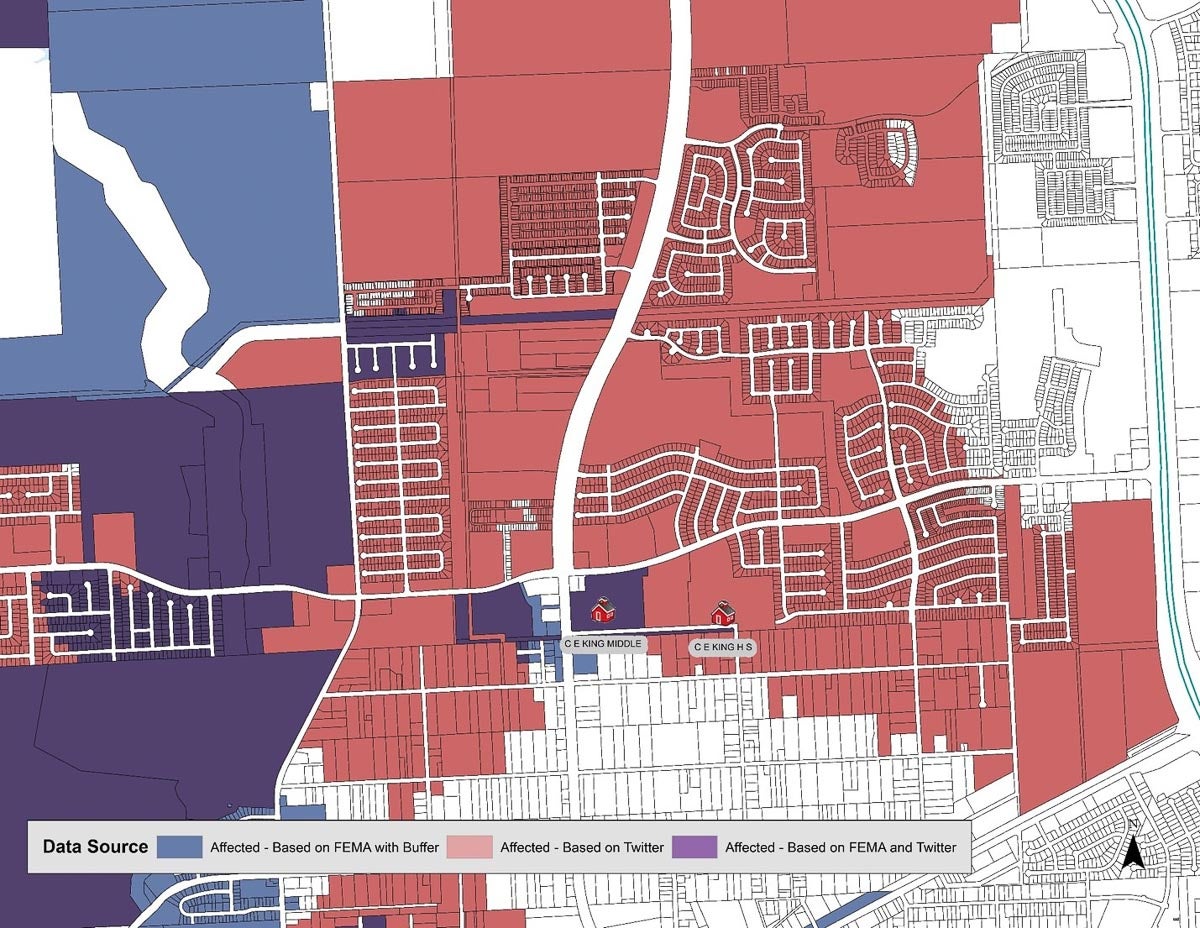

Leveraging data from Twitter would have created a more robust understanding of damage, as the following image displays. The map combines the traditional FEMA estimates, buffered by 75 meters to increase robustness, with the Twitter-sourced damage estimates, buffered by 250 meters.

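To make the buffering idea concrete, here is a minimal sketch in Python. It is not the Kinder Institute's method. It assumes, for illustration only, that each damage estimate is a single point with coordinates in meters in a projected coordinate system, and it treats a location as flagged if it falls within 75 meters of a FEMA point or within 250 meters of a Twitter-sourced point. All coordinates and function names are hypothetical.

```python
import math

# Buffer distances taken from the text above.
FEMA_BUFFER_M = 75
TWITTER_BUFFER_M = 250


def within_buffer(location, points, radius_m):
    """Return True if location is within radius_m of any point.

    Assumption: coordinates are (x, y) pairs in meters in a projected
    coordinate system, so straight-line distance is meaningful.
    """
    x, y = location
    return any(math.hypot(x - px, y - py) <= radius_m for px, py in points)


def flagged_as_damaged(location, fema_points, twitter_points):
    """Combine both sources: flag a location if either buffer covers it."""
    return (
        within_buffer(location, fema_points, FEMA_BUFFER_M)
        or within_buffer(location, twitter_points, TWITTER_BUFFER_M)
    )


# Hypothetical coordinates, not real data.
fema_points = [(0.0, 0.0)]
twitter_points = [(1000.0, 0.0)]
school = (1200.0, 0.0)

fema_only = within_buffer(school, fema_points, FEMA_BUFFER_M)
combined = flagged_as_damaged(school, fema_points, twitter_points)
print(fema_only, combined)
```

In this made-up example the school sits well outside the FEMA buffer but inside the larger Twitter buffer, so only the combined estimate flags it. A real analysis would more likely work with polygons and a geospatial library rather than bare points.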

The Kinder report goes on to highlight the potential for a “disaster data partnership” in the region. Indeed, there needs to be a protocol in place that covers the use cases we already know how to operationalize and leaves room to adapt to evolving situations.

The Houston area would benefit from creating data partnerships not only during disasters but wherever we can leverage big data tools. The structure of these systems could be simple: private corporations collect and house the data, research entities analyze it using local talent and expertise, and government and non-governmental organizations deliver services or humanitarian aid.

Houston’s population, geographical size and vulnerability to disasters compel the city to be innovative. Houston should be leading the way in integrating big data tools and AI.