In the immediate aftermath of Hurricane Harvey and in the months that followed, a multitude of governmental, non-profit and private organizations worked together. During the response and recovery phases, these entities are responsible for providing often scarce yet vital humanitarian aid. Many critical decisions hinge on damage and needs assessments, but these assessments can be slow to produce or can miss some populations.

While the Houston region has been working diligently to mitigate the impacts of disasters like Harvey, it has not always effectively leveraged key data available through social media and crowdsourcing platforms. Social media has been used effectively as a tool to push out messages and information, but it has yet to be used systematically for information retrieval during a disaster.

There is a wealth of information on Facebook, Twitter and other social media and communications platforms. This data can provide a new level of understanding of the spatial distribution of damage, impact and, thus, needs, as well as a temporal understanding of movement throughout a disaster. While social media is generally not representative of the population at large, it still provides useful, generalizable information during emergency situations. Part of its usefulness may even stem from overloaded emergency systems, which push residents to voice their own distress, or someone else's, in an informal public forum.

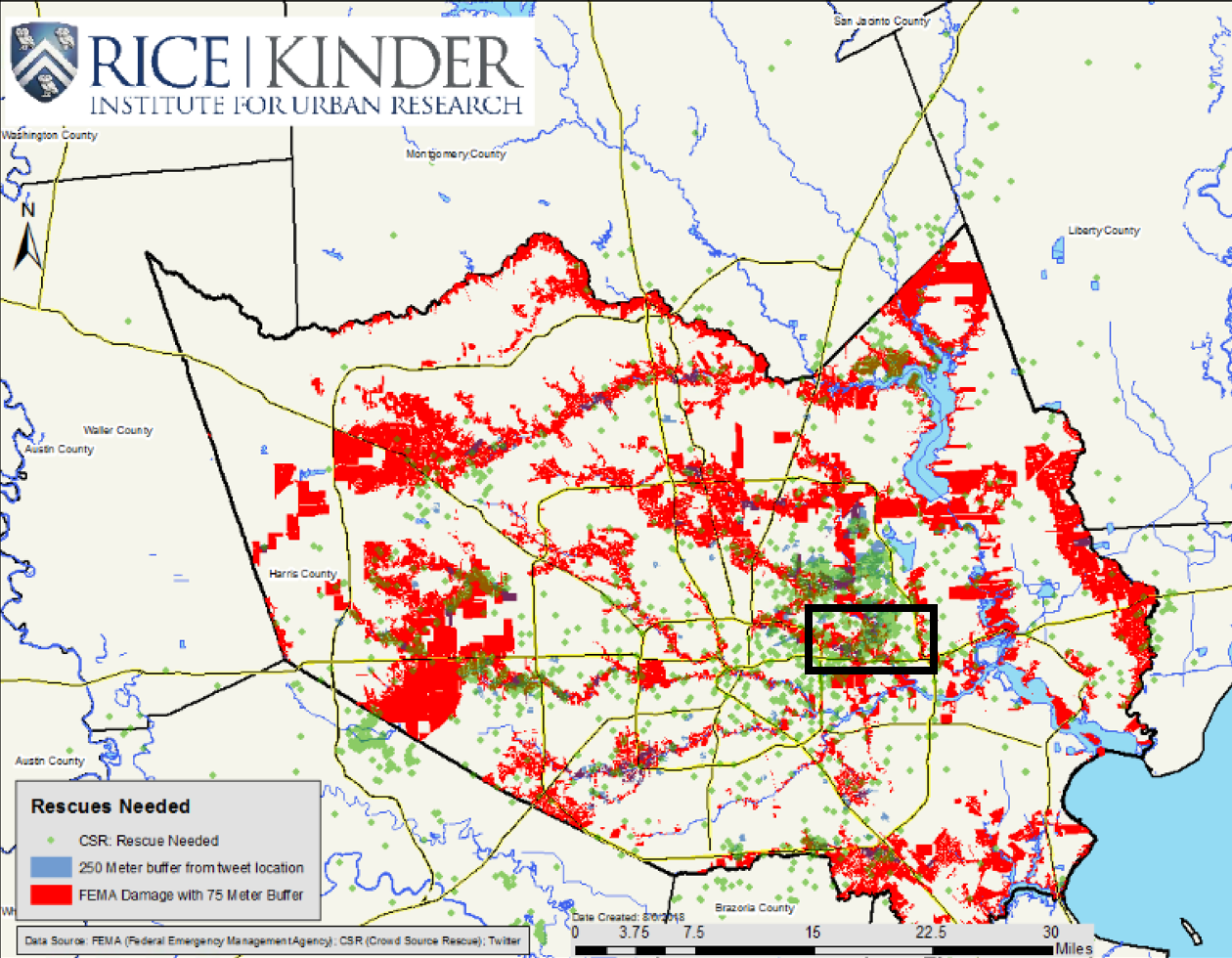

A new Kinder Institute report explores how the region could better leverage “big data” platforms like Twitter through a process known as crisis informatics, which uses information retrieval techniques to shed light on a disaster. The study uses Twitter-sourced damage estimates and water rescue records from CrowdSource Rescue (CSR), a crowdsourced website set up during Harvey, to capture information about damaged structures. These results are in turn evaluated against FEMA damage estimates.

The study uses parcel-level data and buffers the estimates from the three datasets to provide a more robust picture. Twitter estimates were geolocated to the centroid of a parcel and buffered by 250 meters; FEMA estimates were provided at the parcel level and buffered by 75 meters; and CSR estimates were provided with an estimated latitude and longitude and buffered by 50 meters. The assumption is that side-by-side buildings probably suffered similar levels of flooding.
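To make the buffering idea concrete, here is a minimal sketch of how two buffered estimates might be checked for overlap. It is not the study's actual GIS workflow, which the article does not describe. It assumes each estimate can be reduced to a single point in a projected coordinate system measured in meters, and that buffers are simple circles. The function name `buffers_overlap` and all coordinates are hypothetical; only the three radii come from the text.

```python
import math

# Buffer radii in meters, as reported in the article.
BUFFER_M = {"twitter": 250.0, "fema": 75.0, "csr": 50.0}


def buffers_overlap(a, b):
    """Return True when the circular buffers around two estimates touch or overlap.

    Each estimate is a (source, x, y) tuple. x and y are assumed to be
    projected coordinates in meters; this is an assumption of the sketch.
    """
    source_a, xa, ya = a
    source_b, xb, yb = b
    distance = math.hypot(xa - xb, ya - yb)
    # Two circles intersect when their centers are no farther apart
    # than the sum of their radii.
    return distance <= BUFFER_M[source_a] + BUFFER_M[source_b]


# Made-up coordinates, for illustration only.
tweet = ("twitter", 0.0, 0.0)
fema_near = ("fema", 300.0, 0.0)  # 300 m away: within 250 + 75 = 325 m
fema_far = ("fema", 400.0, 0.0)   # 400 m away: beyond 325 m

print(buffers_overlap(tweet, fema_near))  # True
print(buffers_overlap(tweet, fema_far))   # False
```

A real analysis would more likely buffer parcel polygons in GIS software than treat every estimate as a point, so the sketch should be read as a simplification of the idea and not as a reproduction of the method.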

While these new systems are not a replacement for traditional data sources, they can significantly augment initial models with real-time analysis. The study finds that 46 percent of Twitter-sourced estimates and 50 percent of CSR-sourced estimates were not captured by FEMA. Conversely, the 54 percent of Twitter-sourced estimates and 50 percent of CSR-sourced estimates that corroborate FEMA data lend validity to the FEMA maps, while the uncaptured estimates augment the FEMA model where it falls short.
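The two shares reported for each source are complements of one another: every crowdsourced estimate either corroborates a FEMA estimate or does not. The sketch below shows that arithmetic under the assumption that each estimate carries a simple true/false match flag. The function name `corroboration_shares` is hypothetical, and the counts are invented to mirror the 54/46 split reported for Twitter; they are not the study's actual counts.

```python
def corroboration_shares(matched_flags):
    """Return (percent corroborated, percent not captured) for one source."""
    total = len(matched_flags)
    if total == 0:
        raise ValueError("no estimates to summarize")
    corroborated = sum(1 for flag in matched_flags if flag)
    pct_corroborated = 100.0 * corroborated / total
    return pct_corroborated, 100.0 - pct_corroborated


# Hypothetical flags: True means the estimate matched a FEMA estimate.
flags = [True] * 27 + [False] * 23
print(corroboration_shares(flags))  # (54.0, 46.0)
```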

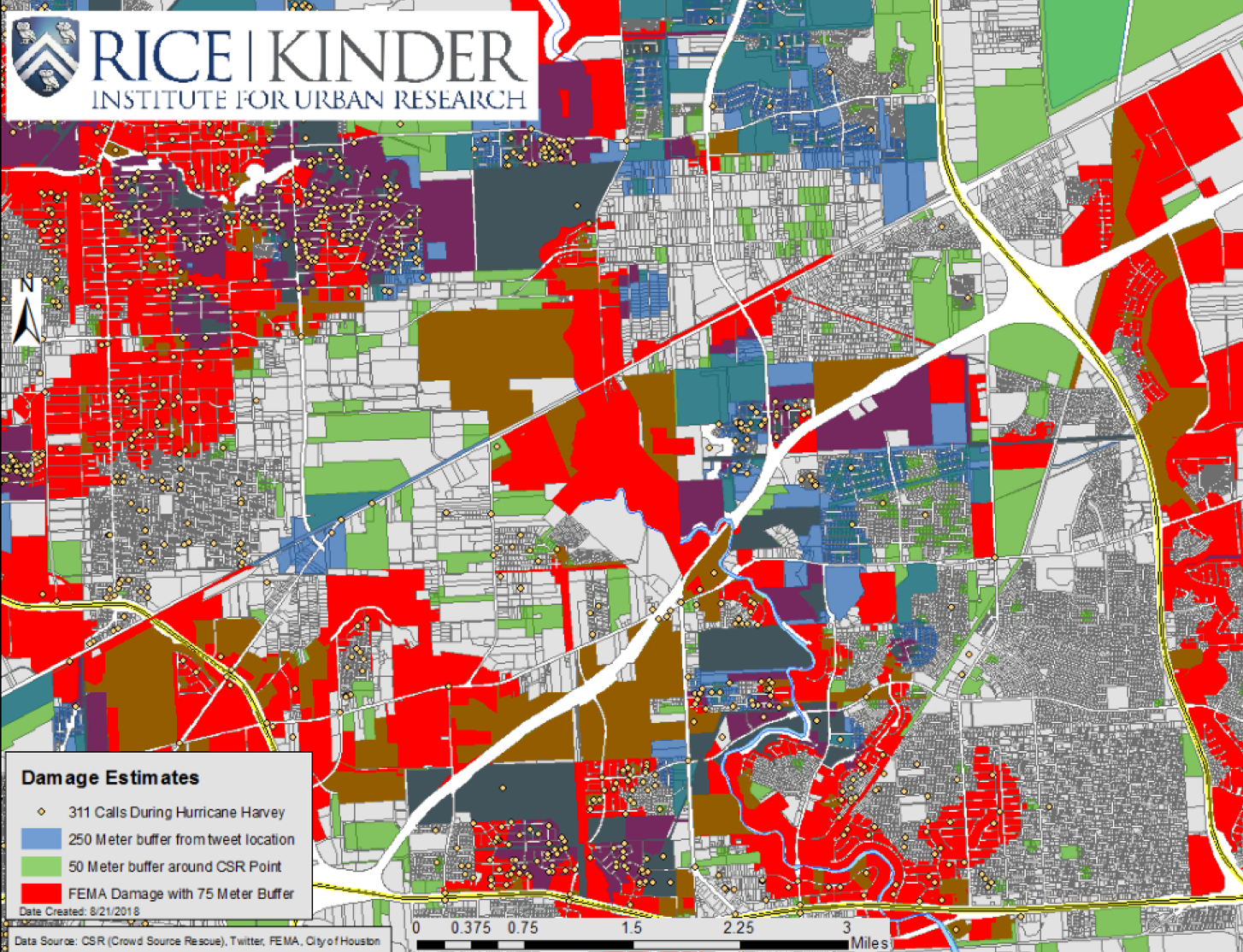

The figure below shows all three datasets together, displaying a more robust picture of damage. There are clear areas of corroboration between the datasets: purple indicates validation between Twitter and FEMA; brown-yellow, validation between CSR and FEMA; and blue-green, validation between CSR and Twitter.

Map: Matthew Krause.

The area highlighted in the map is the Highway 90 corridor in the eastern part of Houston. The area displayed significant activity from all three data sources, likely stemming from the flooding of Greens Bayou, which runs north-south through the area.

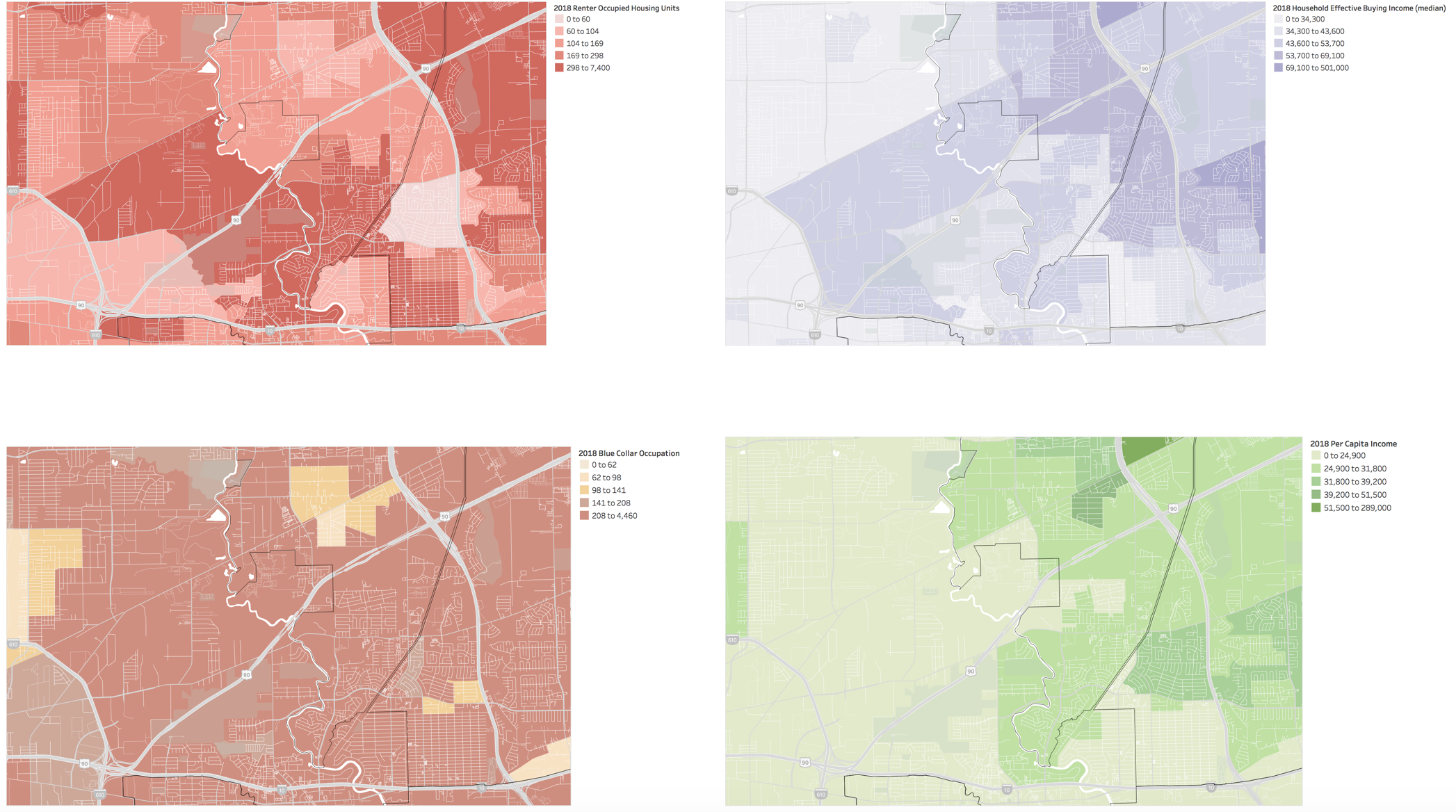

The figure above adds 311 calls from the City of Houston, confirming that the area communicated distress through multiple channels. Notably, the area contains high rates of renter-occupied housing units and blue-collar workers, as well as lower-than-average household effective buying income and per capita income.

Twitter, like other social media platforms, allows for immediate and informal online discussion. Cities should look to leverage these data sources more effectively in the future. While Twitter is not representative of the whole population, it provides important insights, particularly in emergency situations, perhaps as an alternative to overloaded emergency systems. The study does not advocate replacing current methods for assessing damage from disasters, but rather demonstrates additional uses for such data.

The study explores only the potential to augment damage assessments, but the potential to leverage this data to better inform disaster response and recovery is clear. Twitter, Facebook and Google, data sources largely missing from official assessments, can represent people who are missing from them too.